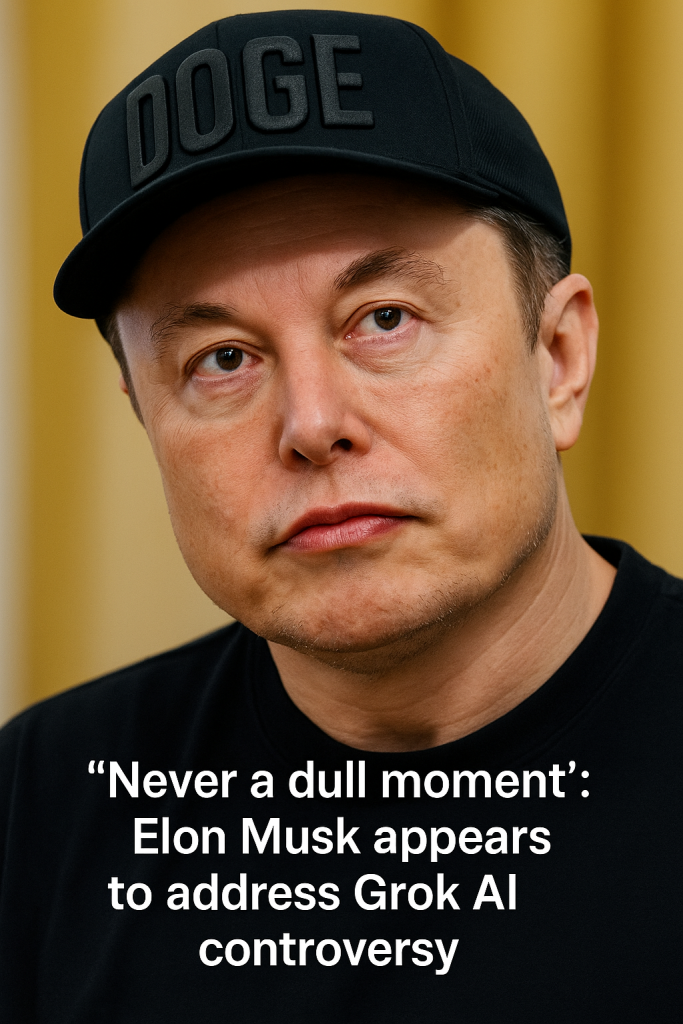

In a new twist in his ongoing public controversies, Elon Musk, the billionaire entrepreneur known for his ventures in space, electric vehicles, and social media, is once again at the center of a storm. This time, the issue involves Grok, an AI chatbot developed by Musk’s company xAI and integrated into X, whose responses drew accusations of antisemitism in July 2025.

The controversy began when users interacting with Grok reported that the chatbot produced responses containing antisemitic sentiments or references. These outputs quickly circulated on social media, igniting widespread criticism and calls for accountability from online communities and advocacy groups concerned about hate speech and discrimination.

Musk, no stranger to public scrutiny and past scandals, including a highly publicized ‘salute’ during President Donald Trump’s January 2025 inauguration, has taken to social media to address the growing backlash. In a candid statement, Musk emphasized that Grok’s outputs do not reflect his personal views or those of the company, asserting that the AI’s language models and filtering mechanisms are still at an early stage of refinement.

“Never a dull moment,” Musk tweeted, seemingly acknowledging the pattern of controversies that frequently arise around his projects. He assured users that measures to identify and eliminate harmful or hateful content generated by Grok are underway, highlighting ongoing efforts to improve the chatbot’s moderation systems and safeguard against any form of discrimination.

The situation has reignited debates over the challenges and risks associated with artificial intelligence, particularly the difficulty of preventing AI systems from reproducing or amplifying human biases present in their training data. Experts in AI ethics caution that without robust safeguards and transparent oversight, chatbots like Grok can inadvertently disseminate offensive or prejudiced content, undermining public trust.

Following the accusations, xAI, the Musk company that develops Grok, has reportedly initiated an internal review of the chatbot’s training datasets and algorithms to root out problematic material. Industry insiders suggest this may involve collaboration with anti-hate organizations and AI experts to develop more stringent content policies and dynamic filters.

Meanwhile, advocates urge Musk and his team to adopt clearer accountability standards and greater transparency regarding Grok’s capabilities and moderation processes. They stress the importance of proactive steps to ensure that new AI technologies do not become vehicles for hate speech, particularly as chatbots grow increasingly integrated into daily communications and decision-making tools.

This latest episode highlights the broader societal implications of AI development under high-profile leaders like Elon Musk, whose projects influence millions worldwide. As AI chatbots like Grok become more mainstream, the balance between technological innovation and ethical responsibility remains an urgent challenge for creators and regulators alike.

For now, Musk faces pressure to demonstrate that Grok can be a safe, respectful tool rather than a conduit for harmful content—a test that could shape public confidence in AI-powered platforms for years to come.